emno: All-in-one vector database

Meet emno: a vector database solution designed for modern AI apps. Learn how it simplifies vector data management and supports scalable AI development.

Founder | Product Manager | Builder

If you've only recently come across the term "vector database," you're not alone. While vector databases have been around for about a decade, they've just recently captured the interest of the broader developer community.

Today, we're experiencing a technological boom, with groundbreaking applications being developed daily using advanced AI tools. Many of these applications rely heavily on vector databases.

Recipe of a Vector database

Vector databases are specialized databases optimized for storing and querying complex, multi-dimensional data, making them a key component in modern data-intensive applications, especially those involving AI and machine learning. The key features of vector databases are:

Vector Data: In the context of a vector database, "vector" usually refers to a mathematical or geometric representation of data, often in the form of a list of numbers (like coordinates or attributes). This is different from traditional databases that store data in tables with rows and columns.

Efficiency with Complex Data: Vector databases are particularly good at handling complex, multi-dimensional data, which is common in fields like machine learning, artificial intelligence, and image processing.

Similarity Searches: Vector databases can perform similarity searches. This means they can quickly find data points that are "similar" or "close" to a given query point in the vector space. This feature is especially useful for tasks like finding similar images, recommending products, or searching for similar text documents.
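To make "close in the vector space" concrete, here is a minimal sketch of a similarity search using cosine similarity over a handful of made-up 3-dimensional vectors. The item names, vectors, and the `top_k` helper are illustrative assumptions. Production vector databases typically rely on approximate nearest-neighbor indexes rather than the exhaustive scan shown here.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k(query, items, k=2):
    """Return the k item names most similar to the query, best first."""
    scored = [(cosine_similarity(query, vec), name) for name, vec in items.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]

# Toy 3-dimensional "embeddings", invented for illustration only.
items = {
    "red shoes": [0.9, 0.1, 0.0],
    "crimson sneakers": [0.8, 0.2, 0.1],
    "garden hose": [0.0, 0.1, 0.9],
}
query = [1.0, 0.0, 0.0]
result = top_k(query, items, k=2)
print(result)  # ['red shoes', 'crimson sneakers']
```

The two footwear items point in nearly the same direction as the query, so they rank ahead of the unrelated item.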

Scalability and Performance: Vector databases are designed to be scalable, performant, and efficiently handle large volumes of data and complex queries.

And that is it. Every vector database aims to solve these core feature requirements.

Challenges

Vector databases have become an integral component of the rapidly growing AI landscape. Although they have been evolving to meet modern demands, vector databases have a long way to go to catch up in terms of user-friendliness, developer accessibility, and enterprise readiness when compared to traditional databases.

Vector databases are still evolving

Nearly all vector databases support only a basic form of filtering in addition to vector operations. They don't support sophisticated queries like SQL and don't integrate with other systems. Vector databases lag behind in:

Tooling and Ecosystem:

Traditional Databases: Benefit from a mature ecosystem, including advanced tools for monitoring, backup, replication, and many third-party integrations.

Vector Databases: Tooling is improving but is less comprehensive than that for RDBMS. Integration with existing technology stacks is also an area of ongoing development.

Stability and Feature Set:

RDBMS: Known for stability and comprehensive features covering various aspects of database management.

Vector Databases: Focused on performance and efficiency in handling specific types of queries and data structures.

And that is not all; in vector databases, you need to independently verify/build Access Control, Resiliency Plans, Transaction control, ACID isolation, and even CRUD support.

A lack of awareness of these limitations can result in costly problems in real-world business settings.

Multi-step Loading/Querying

All vector databases are focused on how to store and retrieve vectorized embeddings efficiently. None of the databases offers a solution for managing the embedding process, e.g., generating the vectors to perform the operations of loading/saving data and querying it.

AI applications that query a vector database need two processes:

Populating the vector database with the vectors

Querying the vector database

These processes are multi-step, similar to any other interaction with the vector database, involving:

chunking (breaking down large text into smaller parts)

converting each chunk into a vector (using an embedding model)

performing operations on the database (this is where the actual database comes into the picture)

Creating and Storing Vectors

Querying Vectors
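The steps above can be sketched end to end as follows. This is a toy illustration under stated assumptions: `chunk_text`, `toy_embed`, and `InMemoryStore` are hypothetical stand-ins for a real chunker, a real embedding model, and a real vector database, and the bag-of-characters "embedding" carries no semantic meaning.

```python
import math

def chunk_text(text, size=40):
    """Split text into fixed-size character chunks (real chunkers are smarter)."""
    return [text[i:i + size] for i in range(0, len(text), size)]

def toy_embed(text, dim=8):
    """Hypothetical stand-in for an embedding model: a unit-length
    bag-of-characters vector with no semantic meaning."""
    vec = [0.0] * dim
    for ch in text.lower():
        vec[ord(ch) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]

class InMemoryStore:
    """Hypothetical stand-in for the vector database itself."""
    def __init__(self):
        self.rows = []  # list of (vector, chunk text)

    def upsert(self, vector, text):
        self.rows.append((vector, text))

    def query(self, vector, k=1):
        # Vectors are unit length, so the dot product equals cosine similarity.
        def score(row):
            return sum(a * b for a, b in zip(vector, row[0]))
        ranked = sorted(self.rows, key=score, reverse=True)
        return [text for _, text in ranked[:k]]

# Process 1: populate (chunk -> embed -> store).
store = InMemoryStore()
document = "Returns are accepted within 30 days. Shipping takes 5 business days."
for chunk in chunk_text(document):
    store.upsert(toy_embed(chunk), chunk)

# Process 2: query (embed the query text -> search).
hits = store.query(toy_embed("When can I return an item?"), k=1)
```

Note that the same embedding function has to be used in both processes; only the final step in each touches the database.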

Tight-coupling with embedding models

Another issue that teams face is that the vector data is tightly coupled with their embedding models.

Example:

If you use OpenAI's text-embedding-ada-002 embedding model, you get back vectors of dimension 1536. Every time you load or query vector data, you need to ensure the conversion happens using the text-embedding-ada-002 embedding model. You are now bound to the text-embedding-ada-002 embedding model.

If you decide to change your embedding model to, say, Cohere's embed-english-light-v3.0 (which has a dimension of 384), you need to:

re-index/re-create ALL your vectors using Cohere's embed-english-light-v3.0 embedding model

and you are (again) bound to this new model every time you load or query vector data

Re-indexing is a time-consuming, costly, and service-disrupting process.
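A minimal sketch of why the coupling exists: an index built from one model's vectors cannot accept or compare vectors from another model. The `ModelBoundIndex` class below is a hypothetical illustration, not any real database's API; the model names and dimensions are the ones from the example above.

```python
class ModelBoundIndex:
    """Hypothetical index that remembers which embedding model produced its vectors."""

    def __init__(self, model_name, dimension):
        self.model_name = model_name
        self.dimension = dimension
        self.vectors = []

    def _check(self, vector, model_name):
        # Even two models with equal dimensions produce incomparable vectors,
        # so both the model name and the dimension must match.
        if model_name != self.model_name or len(vector) != self.dimension:
            raise ValueError(
                f"index is bound to {self.model_name} ({self.dimension} dims); "
                f"got {model_name} ({len(vector)} dims)"
            )

    def add(self, vector, model_name):
        self._check(vector, model_name)
        self.vectors.append(vector)

index = ModelBoundIndex("text-embedding-ada-002", 1536)
index.add([0.0] * 1536, "text-embedding-ada-002")  # accepted

try:
    index.add([0.0] * 384, "embed-english-light-v3.0")
    rejected = False
except ValueError:
    rejected = True  # a 384-dim vector cannot live in a 1536-dim index
```

Switching models therefore means building a fresh index and re-embedding every stored document, which is the re-indexing cost described above.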

Costly

Imagine you are building an application to store the Knowledge Base of your mid-size e-commerce company. You decide on your stack, which might look something like this:

Pinecone for Vector database

OpenAI's text-embedding-ada-002 embedding model

React to build out the Knowledge Base web app

You have:

1 Million pages, docs, or PDFs (containing all the details of your e-commerce products), each with 3,000 characters of text (~800 tokens)

This translates to roughly 1 Million vectors that need to be stored.

With OpenAI's embeddings model, each vector has 1536 dimensions. You may also want to save the vector and the text together. So:

Each vector + text storage will take: (1536 dimensions x 4 bytes) + 3000 bytes = ~ 9KB

Total: 1M x 9KB = 9GB of storage space
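The storage estimate can be reproduced with a few lines of arithmetic. This sketch assumes each dimension is stored as a 4-byte (32-bit) float and each character of text takes roughly one byte; both are assumptions behind the figures above rather than properties of any specific database.

```python
BYTES_PER_FLOAT32 = 4      # assumption: one 32-bit float per dimension
dimensions = 1536          # vector size from the example above
text_bytes = 3000          # assumption: ~1 byte per character of text
num_vectors = 1_000_000

bytes_per_record = dimensions * BYTES_PER_FLOAT32 + text_bytes
total_bytes = bytes_per_record * num_vectors
total_gb = total_bytes / 1e9

print(bytes_per_record)  # 9144 bytes, i.e. ~9KB per record
print(total_gb)          # 9.144, i.e. ~9GB in total
```

Index structures, metadata, and replication add overhead on top of this raw figure.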

What you will pay:

[One time - to OpenAI] cost for generating the embeddings/vector: ~ $350

[Monthly - to Pinecone] a typical setup of 2 Pinecone x8 pods: ~ $1000

Additionally,

Any time a change in your data happens, you pay for the generation of embeddings to OpenAI.

If you receive a lot of queries against this data, you have additional recurring expenses:

You pay OpenAI to convert to embeddings for each query text,

You pay Pinecone for additional queries against your vector data

If you are unhappy with the speed, add more replicas and pay more to Pinecone.

And, if you decide to move away from OpenAI embeddings to something else, it takes at least one day to re-initialize the same 1 Million vectors.

This kind of switch is common while teams experiment to see which model gives them better results.

In reality, the cost is much higher than this. Enterprises with larger corpora can expect to pay 50 to 100 times more and hire more engineers to manage this process. 🤯

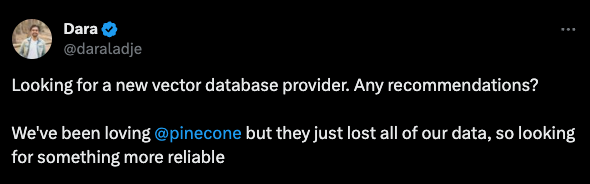

Developer's Dilemma

Working with vector databases often brings up a bunch of tricky questions:

Can we switch our embedding models and experiment easily?

How can we keep our costs in check?

What about making sure we don't lose our data?

And let's not forget about making the whole process smooth and efficient.

It's clear that we need a vector database solution that's not just powerful but also easy to use and easy on the wallet.

Introducing - emno

emno is an all-in-one vector database that takes on the heavy lifting so we can focus on building next-gen AI applications. emno redefines vector database interactions, focusing on embedding model management and streamlining overall processes. It shifts the heavier tasks from users and engineers to the database itself.

Simplified Vector Management

Creating and Storing Vectors

emno automates text segmentation and vector transformation, efficiently storing results in the vector database to save time and costs.

Querying Vectors

Input your query text, and emno handles the rest – from conversion to quick, accurate retrieval.

Cost-efficient

No complicated pods or machine setups. All vectors are stored and retrieved using high-performing, high-availability, edge-hosted machines. emno scales your machines as you grow, and its pricing is based on how many vectors are stored in it. Simple.

User-Friendly APIs and No-Code Options

With easy-to-use SDKs and a Bubble plugin, emno is accessible to developers and no-code enthusiasts.

Conclusion

In the fast-paced world of AI applications, managing vector data is crucial but often challenging. emno streamlines this process, offering a user-friendly, cost-efficient platform for all your vector needs.

Ready to see the difference emno can make in your AI development journey? Try emno today – it's free to start.